Scrolling through your favorite Nigerian forum can feel lively, but things get messy when hate speech or misinformation pops up. Online content moderation steps in as the quiet force behind safer, more enjoyable digital spaces. By monitoring and managing posts, platforms protect users while balancing freedom of expression. This introduction unpacks how moderation works, why it matters for Nigerian communities, and the different methods platforms use to keep conversations respectful. Understanding these details helps you make smarter choices and supports healthier online environments.

Table of Contents

- Defining Online Content Moderation and Its Purpose

- Types of Moderation: Human, Automated, Hybrid Models

- How Moderation Works on Digital Platforms

- Legal Duties, Risks, and User Responsibilities

- Balancing Free Expression and Online Safety

Key Takeaways

| Point | Details |
|---|---|
| Importance of Moderation | Effective content moderation prevents the spread of harmful material and misinformation, ensuring safe online communities. |
| Balance of Safety and Expression | Platforms must navigate the tension between protecting users from harm and allowing free speech, especially in diverse cultural contexts. |
| Role of Users | Users participate in moderation by reporting inappropriate content, which helps shape community standards and holds platforms accountable. |
| Types of Moderation | Hybrid moderation models that combine human and automated systems leverage the strengths of both to enhance content safety and accuracy. |

Defining Online Content Moderation and Its Purpose

Online content moderation sounds technical, but it’s simply how digital platforms keep spaces safe and functional. Think of it like a community manager for your favorite Naijatipsland forum—someone watching conversations, flagging problems, and enforcing house rules.

What exactly is content moderation? Monitoring and managing user-generated content involves evaluating posts, comments, images, and videos to ensure they meet community standards and legal requirements. Moderators—whether human staff, automated systems, or a mix of both—catch harmful material before it spreads.

The process covers more than just deletion. Platforms use diverse moderation techniques including filtering, ordering (how content appears), enhancing visibility of quality posts, and removing violations entirely.

Why Moderation Matters for Nigerian Online Communities

You’ve probably seen what happens when moderation fails. Misinformation spreads like wildfire, hate speech targets specific groups, and respectful discussions devolve into chaos. Moderation prevents this.

Key purposes of content moderation include:

- Preventing harmful or illegal content from spreading

- Protecting users from hate speech, abuse, and threats

- Stopping misinformation that could influence important decisions

- Creating spaces where diverse voices feel safe participating

- Ensuring compliance with Nigerian and international laws

On platforms like Naijatipsland, where political commentary and entertainment discussions thrive, moderation balances two competing needs. You want freedom to share your opinions without censorship. But you also want protection from harassment and false claims that damage reputations.

How Moderation Shapes Your Experience

Moderation directly affects what you see and who participates. When it’s effective, discussions stay focused and civil. Users feel confident posting without fear of abuse. When it’s weak, toxic behavior drives away thoughtful participants, and misinformation drowns out facts.

The actors involved extend beyond platform staff alone:

- Platform moderators and content reviewers

- Automated systems and artificial intelligence

- Users reporting violations

- Non-governmental organizations monitoring online safety

- Government regulators setting legal boundaries

Each actor brings different perspectives and priorities. A moderator in Lagos, a user in Abuja, and a civil rights group in Accra might all view the same post differently.

The Balance Between Safety and Expression

Moderation exists within tension. Platforms must protect people while respecting freedom of speech—a principle many Nigerians value deeply. Different cultures, laws, and communities define “harmful” differently. What’s illegal in Nigeria might be legal elsewhere, and vice versa.

Your role matters here. When you report content as inappropriate, you’re participating in moderation. When you comment thoughtfully instead of attacking someone, you’re helping create healthier spaces.

Pro tip: Before sharing strong opinions on controversial topics, consider how your words affect others in the community and whether you’re adding substance to the discussion or just inflaming tensions.

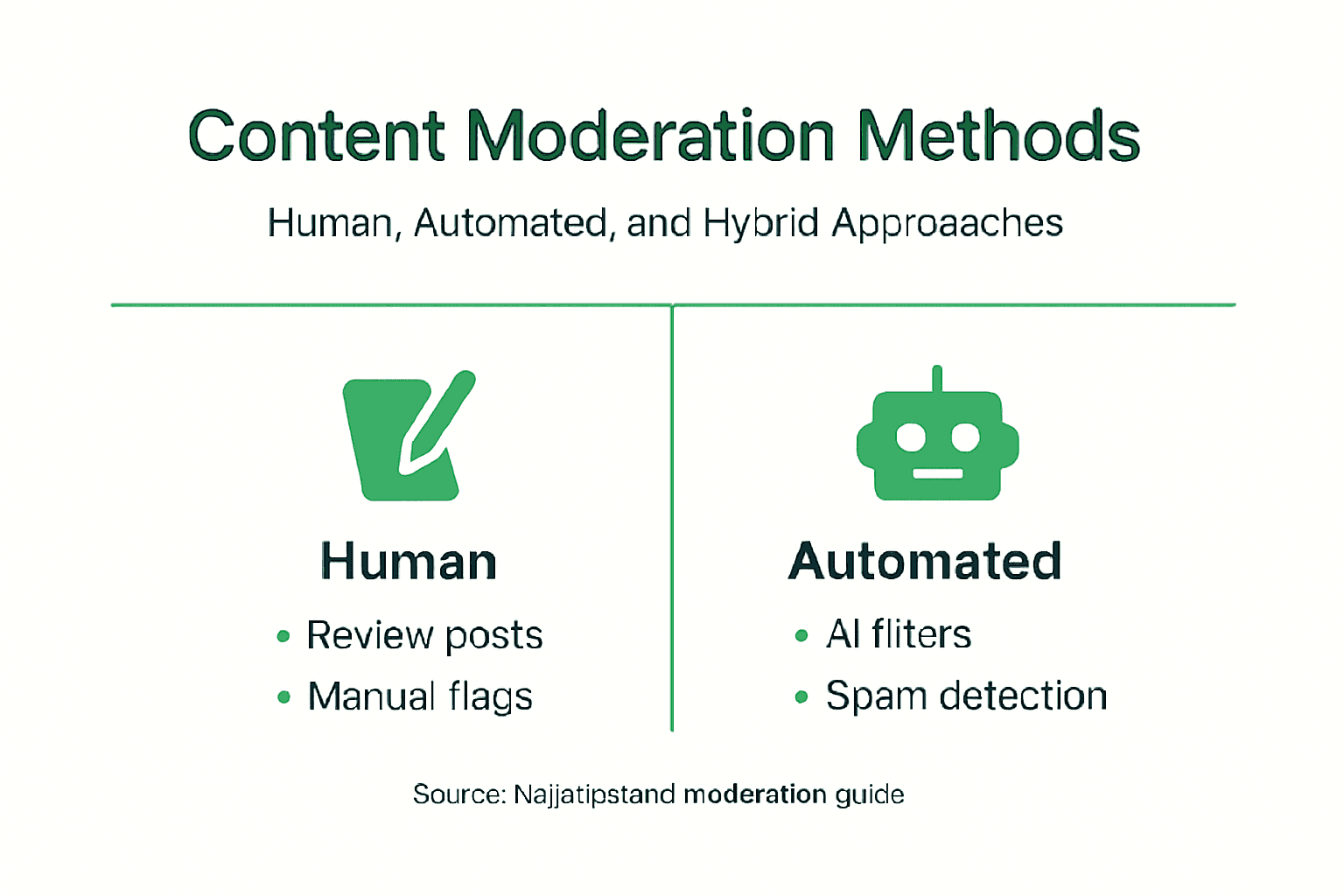

Types of Moderation: Human, Automated, Hybrid Models

Platforms don’t rely on just one approach to keep content safe. Instead, they mix human judgment, artificial intelligence, and hybrid systems to catch problems at different scales. Understanding these methods helps you see why moderation decisions get made the way they do.

Human Moderation: The Personal Touch

Human moderators are real people reviewing content. They read your comments, watch your videos, and make judgment calls about what stays and what goes.

Human moderation strengths:

- Understands context and cultural nuances that AI misses

- Catches sarcasm, irony, and local slang accurately

- Handles complex cases requiring interpretation

- Adapts to new trends and emerging harms quickly

But there’s a cost. Hiring trained moderators is expensive, and reviewing thousands of posts daily takes a psychological toll. Moderators regularly see disturbing content, hate speech, and violent material. This emotional burden contributes to burnout and high turnover.

Automated Moderation: Speed at Scale

Automated systems using AI scan massive volumes of content instantly. They filter obvious violations—spam, nudity, explicit violence—in seconds without breaking a sweat.

Automated moderation advantages:

- Processes millions of posts daily

- Operates 24/7 without fatigue

- Costs far less per item reviewed

- Catches pattern-based violations consistently

The problem? AI struggles with context. A post criticizing government corruption might be incorrectly flagged as “political speech.” A joke about hunger could trigger a misinformation flag. Bias in training data means systems often mishandle content from minority communities.

Automated systems excel at volume but fail at nuance. Human moderators excel at nuance but can’t handle volume. Neither works alone.

Hybrid Models: The Smart Combination

Hybrid moderation combines AI and human review to maximize both strengths. AI screens content first, flagging potential violations automatically. Then human moderators review the flagged items for accuracy.

How hybrid systems work:

- AI scans all incoming content instantly

- System flags borderline cases for human review

- Moderators make final decisions on complex posts

- Humans focus only on difficult cases, not routine ones

This approach solves real problems. Your report gets reviewed faster. Moderators spend energy on cases requiring judgment instead of obvious spam. False positives drop significantly because humans catch AI mistakes.

Nigerian platforms benefit especially from hybrid models. Content mixing Pidgin English, local references, and cultural context confuses AI systems. Human moderators from Nigeria understand these nuances immediately.
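The hybrid flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `violation_score` field stands in for the output of some upstream AI classifier, and the two thresholds are hypothetical values, not figures used by any real platform.

```python
from dataclasses import dataclass

# Hypothetical thresholds; a real platform would tune these on its own data.
AUTO_REMOVE_THRESHOLD = 0.95
HUMAN_REVIEW_THRESHOLD = 0.50


@dataclass
class Post:
    post_id: int
    text: str
    violation_score: float  # assumed classifier output between 0.0 and 1.0


def route(post: Post) -> str:
    """Decide where a post goes: 'auto_remove', 'human_review', or 'publish'."""
    if post.violation_score >= AUTO_REMOVE_THRESHOLD:
        return "auto_remove"   # obvious violation, handled by the AI alone
    if post.violation_score >= HUMAN_REVIEW_THRESHOLD:
        return "human_review"  # borderline case, a moderator decides
    return "publish"           # nothing suspicious detected


posts = [
    Post(1, "Buy cheap followers now!!!", 0.99),
    Post(2, "Dis politician na real wahala", 0.62),
    Post(3, "Who watched the match last night?", 0.03),
]
decisions = {p.post_id: route(p) for p in posts}
print(decisions)
```

In this sketch the Pidgin English post lands in the human review queue, which is the kind of case where a moderator who understands local context adds the most value.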

Here’s a summary comparing human, automated, and hybrid content moderation methods:

| Moderation Method | Main Strength | Key Limitation | Best Use Case |
|---|---|---|---|
| Human | Contextual judgment | Expensive, slow at scale | Nuanced or sensitive content |
| Automated | High speed, large scale | Lacks cultural context, can misinterpret | Obvious violations, bulk content |
| Hybrid | Combines speed and nuance | Requires ongoing management | Large platforms with diverse users |

Pro tip: When you report content, provide context in your report rather than just clicking “Report.” Moderators reviewing your flag will make better decisions when they understand why you found it problematic.

How Moderation Works on Digital Platforms

Moderation isn’t random. Platforms follow structured processes designed to catch problems consistently, treat users fairly, and protect rights. Understanding the workflow helps you see why your reported post gets reviewed the way it does.

The Detection Phase

Content reaches moderators through two main channels. First, algorithms scan everything automatically, flagging posts that match known violation patterns. Second, you and other users report content directly when something feels wrong.

Detection methods include:

- Automated keyword scanning for known harmful terms

- Image recognition identifying banned symbols or content

- Pattern matching spotting coordinated inauthentic behavior

- User reports flagging context AI can’t catch alone

On platforms like Naijatipsland, reports come constantly. Someone flags a comment as hate speech. Another user reports misinformation about elections. A third reports a scam link. All of these enter the moderation queue waiting for review.
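As a rough illustration of the detection phase, the sketch below combines automated keyword scanning with user reports to decide whether a post should enter the moderation queue. The blocklist terms and the function names are hypothetical, chosen only for this example. Real systems rely on much richer signals than a short word list.

```python
import re

# Hypothetical blocklist, for illustration only.
BLOCKED_TERMS = ["free money", "click this link"]

# One case-insensitive pattern with word boundaries around each term.
PATTERN = re.compile(
    r"\b(?:" + "|".join(re.escape(term) for term in BLOCKED_TERMS) + r")\b",
    re.IGNORECASE,
)


def keyword_hits(text):
    """Return every blocked term found in the text, lowercased."""
    return [match.group(0).lower() for match in PATTERN.finditer(text)]


def detect(text, user_reports):
    """Collect the reasons a post should be queued; an empty list means none."""
    reasons = [f"keyword:{hit}" for hit in keyword_hits(text)]
    if user_reports > 0:
        # User reports catch context that keyword matching cannot see.
        reasons.append(f"user_reports:{user_reports}")
    return reasons


print(detect("Get FREE MONEY today", user_reports=0))
print(detect("Nice goal!", user_reports=2))
print(detect("Nice goal!", user_reports=0))
```

A post with no keyword hits can still reach the queue through reports, which mirrors the two detection channels described above.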

The Review and Decision Process

Moderation processes include detection, review, and decision phases structured to balance efficiency with fairness. When content gets flagged, moderators examine it against community standards and legal requirements.

Reviewers ask specific questions:

- Does this violate our community standards?

- Is this illegal in Nigeria or where the user is located?

- What’s the context? Is this political satire or actual hate speech?

- Should this be removed, hidden, or left alone?

Different violations get different responses. Clear spam gets deleted immediately. Hate speech gets removed and may result in account suspension. Misinformation might get labeled with a warning instead of removed. The moderator’s judgment shapes your experience directly.
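The idea that different violations get different responses can be written down as a simple lookup table. The sketch below mirrors the examples in the paragraph above; the category and action names are hypothetical labels, and a real platform's policy would have many more categories and exceptions.

```python
from enum import Enum


class Violation(Enum):
    SPAM = "spam"
    HATE_SPEECH = "hate_speech"
    MISINFORMATION = "misinformation"
    NONE = "none"


# Hypothetical policy table based on the examples in the text.
ACTIONS = {
    Violation.SPAM: ["delete"],
    Violation.HATE_SPEECH: ["remove", "consider_suspension"],
    Violation.MISINFORMATION: ["add_warning_label"],
    Violation.NONE: ["leave_up"],
}


def actions_for(violation):
    """Look up the responses a moderator would apply for a violation type."""
    return ACTIONS[violation]


print(actions_for(Violation.MISINFORMATION))
```

Keeping the policy in one table makes it easier to explain a decision to the affected user, because each action traces back to a named rule.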

What Happens After a Decision

Most platforms pair their moderation policies with an appeals mechanism that gives you recourse when a decision feels wrong. If your post gets removed, you typically receive a notice explaining why and describing your right to appeal.

The process after a decision:

- Action taken (content removed, hidden, or account restricted)

- Notification sent to you explaining the reason

- Appeal option provided if you disagree

- Appeals reviewed by different moderators

- Final decision communicated to you

Moderation works best when platforms explain decisions clearly and let users challenge mistakes. Opacity breeds frustration and distrust.

Nigerian users particularly benefit from this transparency. When a moderator removes your post, you deserve to know specifically what rule it broke. Appeals ensure one person’s judgment doesn’t become final authority.
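The notification and appeal steps listed above can be modeled as a small state machine. This is a minimal sketch, not any platform's actual workflow: the class, the status names, and the moderator names are all hypothetical. It does enforce one rule from the list, that an appeal is reviewed by a different moderator than the one who made the original decision.

```python
from dataclasses import dataclass, field


@dataclass
class ModerationCase:
    post_id: int
    rule_broken: str
    decided_by: str            # moderator who made the original decision
    status: str = "actioned"   # actioned -> notified -> upheld / reversed
    messages: list = field(default_factory=list)


def notify_user(case):
    """Tell the user which rule was broken and that an appeal is available."""
    message = (
        f"Your post {case.post_id} was removed for breaking the rule "
        f"'{case.rule_broken}'. You can appeal this decision."
    )
    case.messages.append(message)
    case.status = "notified"
    return message


def review_appeal(case, reviewer, uphold):
    """Record the appeal outcome; the reviewer must not be the original moderator."""
    if case.status != "notified":
        raise ValueError("only a notified case can be appealed")
    if reviewer == case.decided_by:
        raise ValueError("appeal must be reviewed by a different moderator")
    case.status = "upheld" if uphold else "reversed"
    case.messages.append(f"Appeal result for post {case.post_id}: {case.status}.")
    return case.status


case = ModerationCase(post_id=42, rule_broken="no spam links", decided_by="mod_ada")
notify_user(case)
outcome = review_appeal(case, reviewer="mod_bayo", uphold=False)
print(outcome)
```

Every step appends a message to the case, so the user ends up with a record of both the original reason and the final decision.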

Below is a quick reference for typical moderation process steps and their impact on user experience:

| Step | What Happens | User Impact |
|---|---|---|
| Detection | Content flagged by AI or users | Potential delays before content shows |
| Review & Decision | Moderators assess content | Users informed of outcome |
| Action & Notification | Content acted on and user notified | Clarity about rule violations |
| Appeal | User can challenge decision | Greater trust and accountability |

User Participation in Moderation

You’re not passive in this system. Your reports directly influence what moderators review. Community members who flag content consistently help shape the platform’s safety standards over time.

Pro tip: When reporting problematic content, include specific details about why you find it harmful rather than vague descriptions—moderators reviewing your report will prioritize cases with clear context.

Legal Duties, Risks, and User Responsibilities

Content moderation isn’t just about keeping platforms pleasant. In many jurisdictions, it is also a legal obligation. Platforms can face real consequences for failing to moderate, and you can face consequences for what you post. Understanding these legal realities protects both you and the communities you participate in.

What Platforms Must Do Legally

Platforms operating in Nigeria must comply with Nigerian law. That means removing or restricting illegal content including hate speech, child exploitation material, and content inciting violence. They also must respect data protection rights and provide transparency about how they moderate.

Platforms’ legal responsibilities typically include monitoring actively, removing prohibited content promptly, and documenting their decisions. Failing to act exposes platforms to lawsuits and regulatory penalties. But over-moderating creates its own legal risks, because removing legitimate speech can violate freedom of expression rights.

Platforms must balance these competing demands:

- Removing genuinely harmful or illegal content quickly

- Protecting users’ right to express political and social opinions

- Providing clear explanations when content gets removed

- Allowing users to appeal moderation decisions

Naijatipsland, like all platforms operating here, must follow Nigerian cybercrime laws and content regulations. That shapes every moderation decision made on the site.

Your Legal Responsibilities as a User

You’re not just a passive observer. When you post, comment, or share, you bear legal responsibility for that content. Users are accountable both for violating platform policies and for breaking the law.

What you cannot legally post:

- Hate speech targeting people by ethnicity, religion, or gender

- Misinformation designed to incite violence or undermine elections

- Child sexual abuse material or child exploitation content

- Defamatory statements damaging someone’s reputation falsely

- Copyright-infringing material or stolen intellectual property

- Threats, harassment, or coordinated abuse campaigns

Violating these rules doesn’t just get your content removed. You could face civil lawsuits from people you defame, criminal charges for hate speech or incitement, or account suspension affecting your ability to participate online.

The Evolving Legal Landscape

Laws governing content moderation are changing rapidly, both globally and in Nigeria. Legal frameworks increasingly require transparency and appeals mechanisms that protect your rights when decisions feel wrong. You now have more power to challenge moderation, but platforms face greater accountability too.

Your freedom to speak comes with responsibility. What you post affects real people and can have legal consequences for you.

Nigerian regulations are developing. Stay aware of how laws affecting online speech change. Following platform community standards protects you even when laws remain unclear.

Pro tip: Before posting controversial content, ask yourself if it breaks laws against incitement, defamation, or hate speech—platforms will remove it regardless of your intent if it violates these legal lines.

Balancing Free Expression and Online Safety

Here’s the tension: you want to speak freely without government or platforms silencing you. But you also want protection from abuse, misinformation, and hate. These goals sometimes conflict. Finding the balance shapes what moderation looks like everywhere, including Nigeria.

The Core Problem

Platforms sit between two opposing forces. Users demand unrestricted speech rights. Regulators demand protection from harmful content. These demands don’t always align. A post criticizing the government is legitimate speech in a democracy but could be flagged as dangerous by overzealous moderators.

Regulators worldwide must balance free speech against online safety, and their frameworks vary dramatically by country. The United States emphasizes strong protections against censorship. The European Union permits greater intervention to prevent harm. Nigeria is developing its own approach, influenced by both models but shaped by local values.

The stakes matter. Over-moderation kills democratic discourse. Under-moderation enables harassment and misinformation. Platforms must navigate this minefield constantly.

Why This Balance Matters for Nigeria

Nigerians value free speech deeply after decades of restricted media. You want to discuss politics, criticize leaders, and share opinions without fear. At the same time, you’ve seen misinformation cause real harm—election violence sparked by false claims, communities torn apart by hate speech.

The balance looks different here than elsewhere:

- Political speech gets stronger protection than in some countries

- Religious and ethnic sensitivities require careful moderation

- Misinformation about elections faces stricter scrutiny

- Satire and social commentary require moderator judgment

On Naijatipsland, this plays out constantly. Someone posts sharp criticism of government policy—that stays. Someone incites violence against a religious group—that goes. Someone shares a satirical political cartoon—moderators must decide if it’s speech or hate.

What Proportional Moderation Means

Regulations emphasize reasonable restrictions that protect users without unduly limiting speech. Proportional moderation means the response matches the actual harm. Spam gets removed quietly. Hate speech gets removed with an explanation. Political speech stays up even if it is offensive.

Key principles guiding this balance:

- Clarity: Rules must be clear so you know what’s prohibited

- Transparency: Platforms must explain why content got removed

- Appeals: You must have recourse when moderation feels wrong

- Proportionality: Punishments should match violations

- Democratic values: Speech central to democracy gets stronger protection

The hardest moderation decisions involve speech that’s offensive but not illegal. That’s where the balance gets tested.

Moderators must distinguish between speech that bothers people and speech that causes real harm. Your opinion on a political candidate might offend someone, but it’s protected speech. Coordinated harassment targeting someone for their identity crosses the line.

Your Role in This Balance

You’re not just affected by this balance—you shape it. When you report content, you help platforms understand community standards. When you engage respectfully instead of attacking, you demonstrate that free speech can coexist with civility. When you appeal unfair moderation decisions, you hold platforms accountable.

Responsible participation means:

- Speaking freely while respecting others’ dignity

- Reporting genuine harm instead of minor disagreements

- Accepting moderation mistakes as inevitable in complex systems

- Engaging with different viewpoints rather than silencing them

Pro tip: Question whether content genuinely harms people or simply offends you before reporting—moderators prioritize actual harm, and habitual false reporting damages platform trust.

Safeguard Your Online Experience with Trusted Moderation on Naijatipsland

Navigating the challenges of online content moderation is essential in today’s digital world. As the article highlights, the struggle to balance free expression with safety and truthfulness can be frustrating, especially when misinformation, hate speech, or harassment threaten the quality of conversations. At Naijatipsland, we understand these obstacles and are committed to creating a community where Nigerians feel both empowered to speak and protected from online harm. Our platform brings together thoughtful human moderation and community participation to ensure that discussions stay respectful, relevant, and informative.

Join thousands of Nigerian internet users actively engaging in a safe environment that respects cultural nuances and legal standards. Check out our user-generated content section to participate responsibly and experience moderation that values your voice. Visit Naijatipsland today and become part of a vibrant, secure online community where your opinions matter and quality discussion thrives.

Frequently Asked Questions

What is online content moderation?

Online content moderation is the process of monitoring and managing user-generated content on digital platforms to ensure it adheres to community standards and legal requirements. This includes evaluating posts, comments, images, and videos to prevent harmful or illegal content from spreading.

Why is content moderation important for online communities?

Content moderation is crucial for online communities as it helps prevent the spread of hate speech, misinformation, and abuse. It creates a safe environment for users to participate in discussions and ensures compliance with legal standards, fostering respectful and constructive conversations.

What are the different types of moderation methods?

There are three primary types of moderation methods: human moderation, automated moderation, and hybrid models. Human moderation involves real people making judgment calls, automated moderation uses AI to filter content quickly, and hybrid models combine both approaches to maximize efficiency and accuracy.

How do users participate in the content moderation process?

Users participate in content moderation by reporting inappropriate content. This helps moderators prioritize cases that require review and allows the community to shape the platform’s safety standards over time.